Introduction

Artificial intelligence (AI) is evolving rapidly in 2026, and one of the biggest technologies driving this transformation is GPU-as-a-Service (GPUaaS). As AI models grow larger and more complex, businesses need powerful computing resources to train, fine-tune, and deploy them efficiently. Instead of investing heavily in expensive physical hardware, many organizations are turning to cloud-based GPU infrastructure that provides high-performance computing on demand.

GPUaaS allows companies to access advanced GPUs, such as NVIDIA H100, H200, and Blackwell-based systems, through cloud platforms. This approach removes the need to build costly AI data centers while giving developers on-demand access to computing power. As a result, startups, enterprises, researchers, and AI developers can accelerate innovation without major upfront investment.

What Is GPU-as-a-Service (GPUaaS)?

GPU-as-a-Service is a cloud computing model in which businesses rent GPU resources from infrastructure providers instead of purchasing and maintaining physical GPU servers. These GPUs are optimized for AI workloads, including machine learning, deep learning, generative AI, computer vision, and large language models.

In 2026, GPUaaS platforms offer flexible options ranging from single-GPU instances to massive GPU clusters designed for enterprise-scale AI training.

Why GPUaaS Is Growing in 2026

The demand for AI applications has increased dramatically across industries, including health care, finance, e-commerce, robotics, and media. Training advanced AI models requires enormous computational power that traditional CPU-based systems cannot efficiently handle.

GPUaaS has become popular because it solves several challenges businesses face when building AI infrastructure.

Key Reasons Behind GPUaaS Growth

1. Rising Demand for Generative AI

Businesses are developing AI chatbots, image generation tools, AI assistants, and automation systems that require powerful GPUs for training and inference.

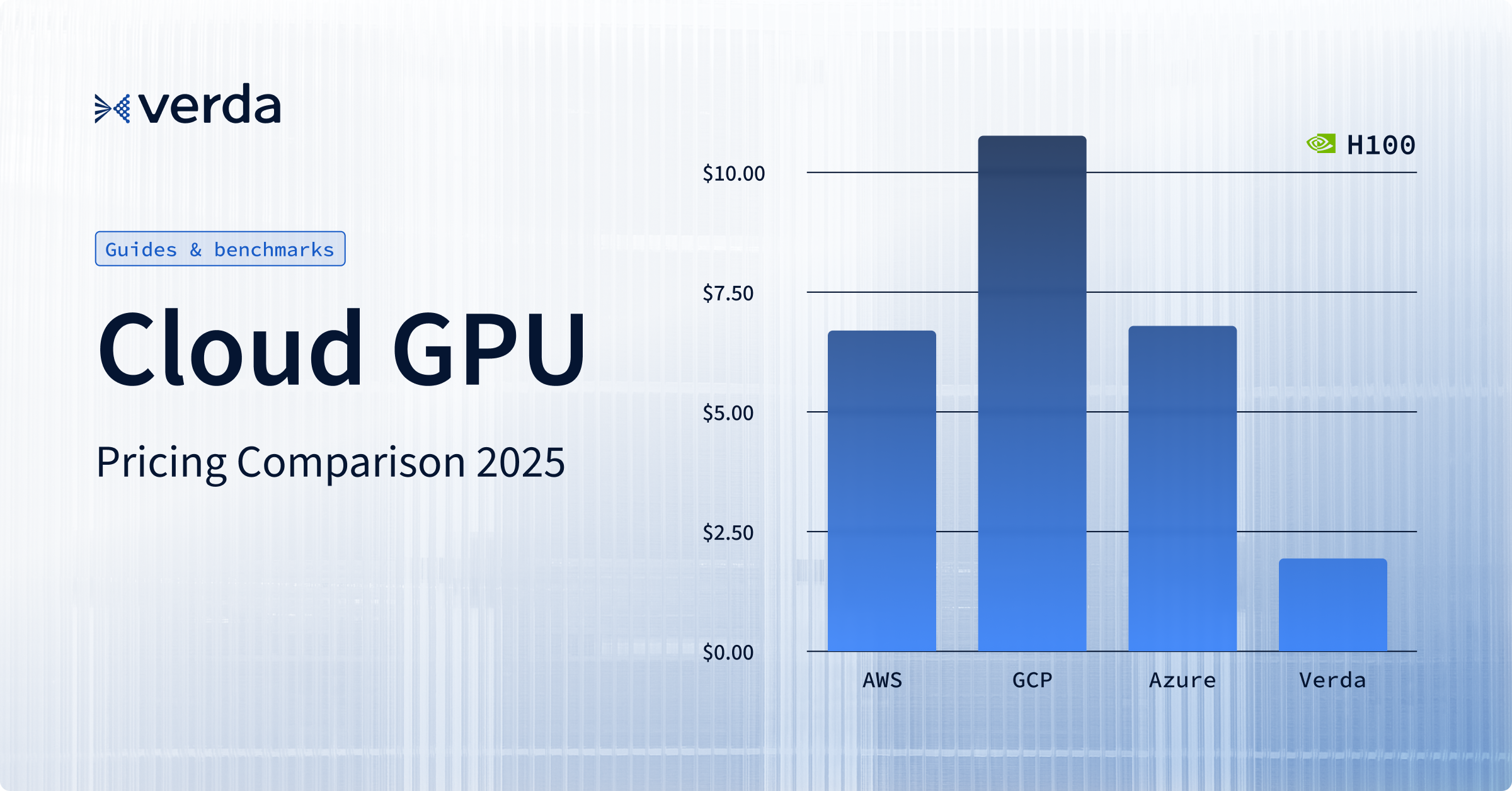

2. High Cost of AI Hardware

Purchasing enterprise-grade GPU servers and maintaining AI infrastructure can cost millions of dollars. GPUaaS lowers this financial barrier through pay-as-you-go pricing models.
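To make the pay-as-you-go argument concrete, the sketch below compares the cost of owning a cluster over a fixed period with the cost of renting GPU-hours. All figures (purchase price, monthly operating cost, hourly rental rate) are hypothetical placeholders rather than real provider prices, and the model deliberately ignores factors such as resale value, financing, and capacity limits.

```python
from dataclasses import dataclass


@dataclass
class OwnedCluster:
    purchase_cost: float     # hypothetical up-front hardware cost (USD)
    monthly_ops_cost: float  # hypothetical power, cooling, and staffing per month (USD)


def owned_cost(cluster: OwnedCluster, months: int) -> float:
    """Total cost of buying the cluster and running it for `months` months."""
    return cluster.purchase_cost + cluster.monthly_ops_cost * months


def rented_cost(gpu_hours: float, hourly_rate: float) -> float:
    """Pay-as-you-go cost: only the GPU-hours actually used are billed."""
    return gpu_hours * hourly_rate


def break_even_gpu_hours(cluster: OwnedCluster, months: int, hourly_rate: float) -> float:
    """GPU-hours at which renting costs the same as owning over the period."""
    if hourly_rate <= 0:
        raise ValueError("hourly_rate must be positive")
    return owned_cost(cluster, months) / hourly_rate


if __name__ == "__main__":
    cluster = OwnedCluster(purchase_cost=300_000, monthly_ops_cost=5_000)  # assumed figures
    print(owned_cost(cluster, 36))                 # 480000
    print(break_even_gpu_hours(cluster, 36, 4.0))  # 120000.0
    print(rented_cost(20_000, 4.0))                # 80000.0
```

With these assumed numbers, renting stays cheaper as long as the workload uses fewer than 120,000 GPU-hours over three years; a team needing 20,000 GPU-hours would pay about $80,000 to rent versus $480,000 to own.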

3. Faster Deployment

Organizations can launch GPU clusters within minutes instead of waiting months for physical hardware installation and configuration.

4. Global Accessibility

Developers from different regions can access high-performance AI infrastructure remotely without setting up local data centers.

How GPUaaS Is Transforming AI Development

GPU-as-a-Service is changing how AI products are built, tested, and deployed. It allows businesses to scale AI operations faster while reducing operational complexity.

1. Faster AI Training

Modern AI models can have billions of parameters and are trained on massive datasets. GPUaaS platforms provide high-performance clusters capable of training these models significantly faster than traditional systems.

With distributed GPU environments, developers can reduce training time from months to days, helping companies bring AI products to market more quickly.
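A rough way to reason about the months-to-days claim is to divide the total GPU-hours a job needs by the number of GPUs, discounted by a scaling-efficiency factor, because communication and synchronization overhead keep real clusters below perfect linear speedup. The job size and the 0.75 efficiency used below are illustrative assumptions, not measured values.

```python
def estimated_wall_clock_hours(total_gpu_hours: float, num_gpus: int,
                               scaling_efficiency: float = 1.0) -> float:
    """Wall-clock time if a job's GPU-hours are spread across num_gpus.

    scaling_efficiency (0 < e <= 1) is an assumed discount for communication
    and synchronization overhead; 1.0 means perfect linear scaling.
    """
    if num_gpus < 1:
        raise ValueError("num_gpus must be at least 1")
    if not 0 < scaling_efficiency <= 1:
        raise ValueError("scaling_efficiency must be in (0, 1]")
    return total_gpu_hours / (num_gpus * scaling_efficiency)


if __name__ == "__main__":
    job_gpu_hours = 17_280  # hypothetical job size
    small = estimated_wall_clock_hours(job_gpu_hours, 8)          # 2160.0 hours
    large = estimated_wall_clock_hours(job_gpu_hours, 256, 0.75)  # 90.0 hours
    print(small / 24, large / 24)  # 90.0 3.75 (days)
```

Under these assumptions, a job that takes about 90 days on 8 GPUs finishes in roughly 3.75 days on 256 GPUs.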

2. Better Scalability for AI Projects

Businesses can increase or reduce GPU usage based on workload requirements.

For example:

- Small startups can rent a few GPUs for experimentation.

- Large enterprises can deploy thousands of GPUs for large-scale AI training.

- AI teams can scale resources instantly during peak demand periods.

This flexibility improves efficiency while preventing unnecessary infrastructure costs.
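Elastic scaling like this is often driven by a simple policy that sizes the GPU pool to the current backlog. The sketch below is one hypothetical policy, assuming each GPU can handle a fixed number of queued jobs and that the team sets a floor and a ceiling on pool size; real GPUaaS platforms expose their own autoscaling settings, and the function and parameter names here are illustrative only.

```python
import math


def desired_gpu_count(pending_jobs: int, jobs_per_gpu: int,
                      min_gpus: int, max_gpus: int) -> int:
    """Hypothetical backlog-based scaling policy.

    Requests enough GPUs to cover the queue, clamped to [min_gpus, max_gpus].
    """
    if jobs_per_gpu <= 0:
        raise ValueError("jobs_per_gpu must be positive")
    if min_gpus > max_gpus:
        raise ValueError("min_gpus cannot exceed max_gpus")
    needed = math.ceil(pending_jobs / jobs_per_gpu)
    return max(min_gpus, min(max_gpus, needed))


if __name__ == "__main__":
    print(desired_gpu_count(0, 4, 1, 64))     # 1  (idle: keep the floor)
    print(desired_gpu_count(10, 4, 1, 64))    # 3  (ceil(10 / 4))
    print(desired_gpu_count(1000, 4, 1, 64))  # 64 (peak: capped at the ceiling)
```

The ceiling caps spending during demand spikes, while the floor keeps a minimum amount of capacity available so small requests are not left waiting.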

Conclusion

In 2026, GPU-as-a-Service is no longer just a cloud solution; it has become a foundation of modern AI innovation. By making advanced computing power more accessible and affordable, GPUaaS is helping businesses of all sizes compete in the rapidly evolving AI industry.